Introduction

Organizations today face an increasingly complex and volatile risk landscape. Traditional risk management frameworks are struggling to keep pace with the rapid growth in data volume, real-time threat vectors, and rising stakeholder expectations. Artificial Intelligence (AI) has emerged as a strategic asset, offering enhanced visibility, predictive capabilities, and operational efficiency across risk domains.

This comprehensive guide explores how AI is transforming risk management functions and enabling organizations to turn risk into resilience.

What is AI and Why Does It Matter in Risk Management?

-

Definition of AI and Its Core Technologies

According to IBM, artificial intelligence refers to computer systems that simulate human intelligence by performing tasks such as learning, reasoning, and decision-making. The primary technologies that underpin AI include machine learning (ML), natural language processing (NLP), and computer vision. These technologies work together to interpret vast amounts of structured and unstructured data, derive insights, and continuously improve performance through iteration.

In the context of risk management, AI enables the automation and enhancement of core functions such as threat detection, fraud prevention, credit assessment, and compliance monitoring. By integrating AI, risk managers can move from reactive approaches to proactive and predictive strategies that are both scalable and adaptable.

-

The Growing Role of AI in Transforming Risk Management

Across industries, AI is playing a pivotal role in redefining how organizations identify, assess, and respond to risks. In financial services, for instance, AI models are used to conduct real-time transaction monitoring and flag anomalies indicative of fraud or money laundering. In cybersecurity, AI systems analyze millions of logs per second to detect potential intrusions and mitigate threats before they escalate.

The integration of AI is also improving credit risk management. Financial institutions are using AI to analyze alternative data sources such as social behavior, transaction patterns, and mobile usage to assess creditworthiness, particularly for underserved populations. This allows for more inclusive and accurate credit models.

Furthermore, companies are deploying AI-driven tools to monitor regulatory compliance in real time. These tools can parse complex legal documents, identify obligations, and alert teams to any changes or potential breaches. The result is a significant reduction in manual workload and a higher standard of compliance.

-

Key Statistics or Trends in AI Adoption

The use of AI in risk management is expanding rapidly. According to a 2023 report by McKinsey, 68 percent of financial institutions have prioritized AI in their risk and compliance strategies. This reflects growing recognition of AI’s potential to improve decision-making and operational efficiency.

Market research indicates that the global AI model risk management market reached 5.5 billion USD in 2023 and is projected to grow to 12.6 billion USD by 2030, representing a compound annual growth rate of 12.8 percent. This investment surge is driven by increasing regulatory expectations and the need for robust AI governance.

Despite the momentum, skill shortages remain a barrier. A study by Deloitte found that only 19 percent of organizations currently possess the internal expertise to audit and manage AI models effectively. However, 66 percent plan to build formal AI risk management frameworks within the next four years, signaling a proactive shift in strategic planning.

Business Benefits of AI in Risk Management

-

Enhanced Fraud Detection

AI provides advanced fraud detection by analyzing large volumes of transactional data in real time. Machine learning algorithms identify suspicious patterns, enabling earlier detection and reducing false positives. Organizations can automate response protocols, thereby mitigating losses and improving customer trust.

An example of this is Airtel’s AI system, which successfully blocked over 180,000 malicious links and protected 5.4 million users. This demonstrates the scalability and impact of AI-driven fraud prevention tools.

-

Smarter Credit and Underwriting Decisions

AI improves credit risk assessments by incorporating non-traditional data sources and applying advanced analytics to determine borrower risk. This results in more accurate underwriting decisions and the inclusion of previously underserved populations.
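
A minimal sketch of how such a model can turn alternative data into a borrower risk estimate: a logistic-regression probability-of-default (PD) model fitted with plain gradient descent. The two features and the six toy applicants are hypothetical stand-ins for non-traditional data; a production credit model would need far more data, out-of-sample validation, and fairness testing.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic(rows, labels, lr=0.5, epochs=2000):
    """Fit weights and a bias by batch gradient descent on the log-loss."""
    n_features = len(rows[0])
    weights = [0.0] * n_features
    bias = 0.0
    n = len(rows)
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for x, y in zip(rows, labels):
            # Prediction error for this applicant: predicted probability minus outcome.
            error = sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias) - y
            for j in range(n_features):
                grad_w[j] += error * x[j]
            grad_b += error
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias


def probability_of_default(x, weights, bias):
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


# Hypothetical alternative-data features, both scaled to [0, 1]:
# [on-time bill-payment rate, stability of monthly transaction volume]
applicants = [[0.95, 0.90], [0.90, 0.80], [0.85, 0.70], [0.40, 0.30], [0.30, 0.50], [0.20, 0.20]]
defaulted = [0, 0, 0, 1, 1, 1]  # 1 = defaulted in this toy history

w, b = train_logistic(applicants, defaulted)
print(f"reliable payer PD: {probability_of_default([0.92, 0.85], w, b):.2f}")
print(f"erratic payer PD:  {probability_of_default([0.25, 0.30], w, b):.2f}")
```

Logistic regression is chosen here only because its coefficients are easy to inspect, which helps when individual credit decisions must be explained.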

Fintech companies like Upstart have used AI to reduce loan default rates while increasing loan approvals. By leveraging machine learning, these firms can offer competitive products while managing risk effectively.

-

Improved Cybersecurity Posture

AI enhances cybersecurity by automating threat detection and response. Systems can identify malware, phishing attempts, and other cyber threats faster than traditional methods. This reduces response times and helps contain breaches before significant damage occurs.

For example, AI-driven threat intelligence platforms continuously monitor network activity and apply behavioral analytics to detect anomalies, improving overall security posture.

Before investing in AI systems for risk management, it is important to understand the ethical issues involved. You can read more in AI Ethics Concerns: A Business-Oriented Guide to Responsible AI.

-

Real-time Insider Risk Monitoring

AI tools monitor employee behavior and digital activity to detect potential insider threats. Continuous monitoring combined with anomaly detection enables organizations to act swiftly and prevent internal breaches.

Organizations using such tools have reported reductions of over 50 percent in false alerts and significant improvements in response times. This increases trust and reinforces internal security.

-

Proactive Vulnerability Management

AI helps organizations identify and prioritize system vulnerabilities through continuous scanning and predictive analytics. This allows teams to allocate resources effectively and patch critical issues before they are exploited.
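
One way to picture this prioritization is a risk score that multiplies severity by a predicted likelihood of exploitation and by the criticality of the affected asset. The sketch below assumes that formula, the 0-1 scales, and the hypothetical `VULN-00x` identifiers; the exploitation probability stands in for the output of a predictive model.

```python
from dataclasses import dataclass


@dataclass
class Vulnerability:
    vuln_id: str                # hypothetical identifier
    severity: float             # e.g. a CVSS base score, 0-10
    exploit_probability: float  # model-predicted likelihood of exploitation, 0-1
    asset_criticality: float    # business importance of the affected asset, 0-1

    def risk_score(self) -> float:
        # Scale severity to 0-1, then combine the three factors multiplicatively.
        return (self.severity / 10.0) * self.exploit_probability * self.asset_criticality


def patch_queue(vulns, capacity):
    """Return the IDs of the `capacity` highest-risk vulnerabilities, highest first."""
    ranked = sorted(vulns, key=lambda v: v.risk_score(), reverse=True)
    return [v.vuln_id for v in ranked[:capacity]]


backlog = [
    Vulnerability("VULN-001", severity=9.8, exploit_probability=0.02, asset_criticality=0.3),
    Vulnerability("VULN-002", severity=7.5, exploit_probability=0.60, asset_criticality=0.9),
    Vulnerability("VULN-003", severity=5.3, exploit_probability=0.40, asset_criticality=1.0),
    Vulnerability("VULN-004", severity=8.1, exploit_probability=0.05, asset_criticality=0.8),
]
print(patch_queue(backlog, capacity=2))  # ['VULN-002', 'VULN-003']
```

With these inputs the highest-severity item, VULN-001, drops out of the top two because its predicted exploitation likelihood and asset criticality are low, which is the kind of reordering predictive prioritization aims for.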

Gartner predicts that organizations using AI for exposure management will be three times less likely to experience significant breaches by 2026, highlighting the importance of proactive risk controls.

Challenges Facing AI Adoption in Risk Management

-

Emerging Threat Complexity

As cyber threats become more sophisticated, AI systems must continually evolve to keep pace. Attackers are using AI to automate and scale their operations, increasing the urgency for robust AI defenses.

Organizations must invest in adversarial testing and model validation to ensure their AI systems can resist manipulation and deliver consistent results under varying conditions.

-

Limited Internal Expertise

Despite increasing interest, many organizations lack the internal expertise required to implement and manage AI in risk management effectively. This includes data scientists, AI auditors, and governance professionals.

Without the right talent, AI initiatives may fail to deliver value or expose the organization to new risks. Upskilling existing staff and recruiting specialized roles are critical steps for long-term success.

-

Data Silos and Poor Data Quality

AI depends on high-quality, integrated data to function optimally. However, many organizations struggle with fragmented systems and unstructured data formats, limiting the effectiveness of AI models.

Implementing centralized data governance frameworks and investing in data cleansing and integration tools are essential for overcoming this barrier.

Securing how AI is used in risk management is just as important, so read more in AI and Data Privacy: Balancing Innovation with Security.

-

Inadequate Governance Structures

AI introduces new risks, including model bias, lack of explainability, and ethical concerns. Many organizations do not have formal governance structures in place to address these issues.

Establishing clear policies, accountability frameworks, and oversight mechanisms will ensure responsible AI usage and align with evolving regulatory standards.

-

Operational Risks from AI Tools

AI systems, if not properly monitored, can introduce operational risks. These include unintended decision-making behaviors, data leakage, and system malfunctions.

Organizations must treat AI systems as part of their critical infrastructure, incorporating regular audits, scenario testing, and contingency planning into their operational processes.

Specific Applications of AI in Risk Management

-

AI‑Driven Fraud Detection in Financial Services

AI‑based fraud detection systems address the growing volume of fraud, particularly as fraudsters use generative AI and deepfakes to scale their attacks.

These systems apply supervised machine learning and anomaly detection to transaction-level data, user IP addresses, device attributes, and behavioral biometrics. They integrate into real‑time payment flows and flag suspicious transactions within milliseconds.
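
The anomaly-detection half of such a system can be illustrated with a per-customer baseline. This minimal sketch uses the modified z-score (median and median absolute deviation), a robust statistical stand-in for the supervised models described above rather than what any vendor actually runs; the 3.5 cutoff is a common rule of thumb, and the `new_device` adjustment and transaction history are hypothetical.

```python
import statistics


def modified_z_score(amount, history):
    """Robust z-score of `amount` against a customer's past amounts (median/MAD)."""
    median = statistics.median(history)
    mad = statistics.median(abs(x - median) for x in history)
    if mad == 0:
        return 0.0 if amount == median else float("inf")
    # 0.6745 makes the MAD comparable to a standard deviation for normal data.
    return 0.6745 * (amount - median) / mad


def is_suspicious(amount, history, new_device=False, threshold=3.5):
    """Flag amounts far from the customer's norm; a new device halves the cutoff
    (a hypothetical rule standing in for richer device and behavioral signals)."""
    limit = threshold / 2 if new_device else threshold
    return abs(modified_z_score(amount, history)) > limit


past_amounts = [42.0, 55.5, 38.2, 61.0, 47.3, 52.8, 44.1, 58.9, 49.5, 40.7]
print(is_suspicious(53.0, past_amounts))   # False
print(is_suspicious(950.0, past_amounts))  # True
```

The median and MAD are used instead of the mean and standard deviation so that one earlier outlier in the history does not inflate the baseline and hide the next one.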

Operationally, AI fraud engines reduce false positives, improve detection accuracy, and minimize customer friction and chargeback costs. Technical considerations include ethical bias management and balancing detection sensitivity.

Real‑World Example: Mastercard uses its “Decision Intelligence” system to analyze over 160 billion transactions per year and detect fraudulent activity within 50 ms, boosting detection rates while recognizing potential algorithmic biases.

-

Real‑Time Market Risk Forecasting

AI enhances traditional Value-at-Risk (VaR) and stress‑testing models by incorporating real-time global economic indicators, news sentiment, and volatility signals.

Deep learning time‑series models combine macro- and microeconomic data with sentiment analysis to feed Monte Carlo simulations, whose results are integrated into risk dashboards and pre‑trading alerts for traders and risk officers.
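
A minimal Monte Carlo sketch of one-day Value-at-Risk, assuming normally distributed daily portfolio returns. The `vol_multiplier` parameter is a hypothetical hook where an AI-derived volatility forecast (for example, one adjusted for news sentiment) would plug in; production models use fat-tailed, multi-factor simulations and full revaluation of positions.

```python
import random


def monte_carlo_var(portfolio_value, mean_return, volatility,
                    confidence=0.99, n_sims=100_000, vol_multiplier=1.0, seed=7):
    """Estimate one-day Value-at-Risk as the simulated loss at the given confidence level."""
    rng = random.Random(seed)
    sigma = volatility * vol_multiplier  # hypothetical AI-driven volatility adjustment
    # Simulate daily returns and convert them to losses (positive = money lost).
    losses = sorted(-portfolio_value * rng.gauss(mean_return, sigma) for _ in range(n_sims))
    # VaR is the loss exceeded on only (1 - confidence) of the simulated days.
    index = min(int(confidence * n_sims), n_sims - 1)
    return losses[index]


calm = monte_carlo_var(10_000_000, mean_return=0.0, volatility=0.02)
stressed = monte_carlo_var(10_000_000, mean_return=0.0, volatility=0.02, vol_multiplier=1.5)
print(f"99% one-day VaR, calm: ${calm:,.0f}; stressed: ${stressed:,.0f}")
```

Because both runs reuse the same seed, the stressed run scales the same random draws, so the gap between the two figures isolates the effect of the volatility forecast.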

Strategically, they support proactive risk mitigation, improved capital allocation, and compliance with Basel regulations. Considerations include model interpretability and tail‑risk coverage.

Real‑World Example: Citibank implemented AI‑powered Monte Carlo stress testing, reducing operational losses by 35%, improving forecasts, and enhancing real-time risk insights.

-

Insider Threat Detection with LLM‑Enhanced IRM

Detecting insider threats is complex; traditional systems generate false alarms and miss nuanced behavior.

Modern systems use behavioral analytics, autoencoder neural nets, and LLM‑based context scoring on endpoint logs, email metadata, and login patterns. These integrate into SIEM platforms for live alerts, enabling automated remediation.
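
A full autoencoder is too large for a short example, so this sketch swaps in a simpler technique that plays the same role: it learns each user's normal range for a few activity features and scores how far a new day deviates (an autoencoder would instead score reconstruction error). The feature names, five-day history, and alert threshold are hypothetical.

```python
import math
import statistics

FEATURES = ("login_hour", "files_accessed", "mb_uploaded")  # hypothetical log-derived features


def build_baseline(history):
    """Per-feature mean and standard deviation from a user's past daily activity."""
    baseline = {}
    for name in FEATURES:
        values = [day[name] for day in history]
        # Fall back to 1.0 if a feature never varied, to avoid dividing by zero.
        baseline[name] = (statistics.mean(values), statistics.pstdev(values) or 1.0)
    return baseline


def deviation_score(today, baseline):
    """Root-mean-square z-score across features; larger means less typical behavior."""
    squared = [((today[name] - mu) / sd) ** 2 for name, (mu, sd) in baseline.items()]
    return math.sqrt(sum(squared) / len(squared))


history = [
    {"login_hour": 9, "files_accessed": 40, "mb_uploaded": 12},
    {"login_hour": 8, "files_accessed": 35, "mb_uploaded": 10},
    {"login_hour": 9, "files_accessed": 45, "mb_uploaded": 15},
    {"login_hour": 10, "files_accessed": 38, "mb_uploaded": 11},
    {"login_hour": 9, "files_accessed": 42, "mb_uploaded": 14},
]
baseline = build_baseline(history)
normal_day = {"login_hour": 9, "files_accessed": 41, "mb_uploaded": 13}
odd_day = {"login_hour": 2, "files_accessed": 400, "mb_uploaded": 2048}
ALERT_THRESHOLD = 3.0  # hypothetical; tuning it trades missed threats against alert noise

for label, day in (("normal", normal_day), ("odd", odd_day)):
    print(label, deviation_score(day, baseline) > ALERT_THRESHOLD)
```

Scoring each user against their own history, rather than against company-wide averages, is what keeps a naturally heavy uploader from generating a daily false alarm.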

These tools reduce alert noise, accelerate incident response, and improve detection precision. Key considerations include privacy, disproportionate profiling, and federated learning safeguards.

Real‑World Example: One organization deployed an AI‑driven IRM system with adaptive scoring and LLM‑based detection, reducing false positives by 59%, improving detection rates by 30%, and shrinking response times by 47%.

-

Predictive Health & Safety Risk Analytics

Workplace incidents pose risks in industrial settings. AI systems using wearable sensor data and ergonomic analytics can predict fatigue, unsafe posture, and exposure to hazards.

These solutions apply machine learning to accelerometer, location, and biometric data to assess risk indexes in real time, interfacing with safety dashboards and alerts. This leads to fewer accidents, reduced workers’ comp claims, and better injury prevention strategies. Ethical and technical concerns include data privacy, monitoring consent, and sensor accuracy.

Real‑World Example: A logistics firm used wearable-based predictive analytics to identify ergonomic and fatigue risks in manual laborers, reducing injury incidents by 20% (projected based on similar implementations).

-

AI‑Enhanced Governance, Risk & Compliance (GRC)

GRC functions increasingly leverage AI for real-time control testing and risk assessment.

AI ingests structured logs, compliance reports, and regulatory changes, then applies NLP to map controls and identify deviations, integrating with GRC platforms. This enhances risk prioritization, cuts manual audit hours, and flags non-compliance with speed. Security of sensitive compliance data and auditability of AI findings are key concerns.
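
Control mapping can be illustrated with a much simpler technique than production GRC tools use: bag-of-words cosine similarity between a new obligation and each control description. This minimal sketch assumes a hypothetical control catalog, rule text, stopword list, and 0.2 similarity cutoff; real systems typically rely on richer language models.

```python
import math
import re
from collections import Counter

STOPWORDS = {"the", "a", "an", "and", "or", "of", "to", "for", "must", "be", "is", "are", "all", "within"}


def vectorize(text):
    """Lowercase word counts, ignoring very common words and digits."""
    words = re.findall(r"[a-z]+", text.lower())
    return Counter(w for w in words if w not in STOPWORDS)


def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def map_obligation(obligation, controls, min_similarity=0.2):
    """Return (control_id, similarity) pairs above the cutoff, best match first."""
    ob_vec = vectorize(obligation)
    scored = [(cid, cosine(ob_vec, vectorize(desc))) for cid, desc in controls.items()]
    return sorted((s for s in scored if s[1] >= min_similarity), key=lambda s: s[1], reverse=True)


controls = {  # hypothetical internal control catalog
    "CTRL-ACCESS-01": "Quarterly review of user access rights to customer data systems",
    "CTRL-BREACH-02": "Notify the regulator of personal data breaches within 72 hours",
    "CTRL-VENDOR-03": "Annual security assessment of third-party vendors",
}
new_rule = "Firms must report any personal data breach to the supervisory authority within 72 hours."
matches = map_obligation(new_rule, controls)
print(matches)  # an empty list would signal a potential control gap
```

Here only CTRL-BREACH-02 clears the cutoff; an obligation that matches no control would be a candidate gap for a compliance analyst to review. Plain word matching also treats "breach" and "breaches" as different words, one reason production tools add stemming or embeddings.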

Real‑World Example: A Fortune 500 company used AI‑powered GRC tools to continuously monitor compliance controls, flagging risks before scheduled audits and increasing efficiency by 30%, with 43% already evaluating similar tools.

-

Cyber‑Attack Prediction and Automated Response

Cyber threats like phishing and deepfake attacks are rising, prompting AI‑based early-warning systems. Machine learning models analyze network traffic, syslogs, and user behavior to predict attacks before they occur, integrating with SOAR platforms for automated containment.

This speeds incident response, cuts dwell time, and protects IT assets. Considerations include alert fatigue, evolving adversarial tactics, and ensuring human control.

Real‑World Example: A global bank deployed predictive cyber‑attack analytics, resulting in 40% faster detection and a 55% reduction in incident response time, while maintaining human oversight (benchmarked from industry averages).

Whether you’re a CTO or CEO looking to modernize your risk management platform or a risk management professional seeking to improve business operations, now is the time to act. Explore cutting-edge AI solutions at AI Solution Delivery to integrate AI-powered tools into your risk operations.

Examples of AI in Risk Management

Real‑World Case Studies

1. Mastercard: Accelerated Fraud Detection with Decision Intelligence Pro

Mastercard has supercharged its fraud detection engine through Decision Intelligence Pro, embedding generative AI within its core platform to enhance real-time transaction protection.

Traditional rule‑based systems struggled with dynamic fraud tactics and often produced excessive false positives, which frustrated consumers and strained issuers’ operational teams.

By infusing transformer‑powered generative AI into its existing framework, Mastercard improved the capture of subtle fraud patterns, such as merchant networks or device anomalies, without disrupting payment flow at scale. Trained on hundreds of billions of historical transactions, the model evaluates transaction behavior within milliseconds. It builds multi‑dimensional risk pathways from contextual signals (merchant co‑visits, device type, location, and behavioral biometrics) and outputs a score in under 50 ms.

Mastercard reports a 20–300% improvement in fraud detection rates, a 200% reduction in false positives, and 300% faster identification of at-risk merchants. Decision Intelligence Pro sets a new benchmark: generative AI isn’t just reactive; it anticipates, identifies, and prevents fraud faster than ever while preserving user experience and embedding human oversight to manage bias.

2. Federated Learning in Banking: Collaborative Fraud Detection

Leading banks and fintechs are turning to federated learning to collectively train fraud-detection models without sharing customer data—a watershed shift in privacy-preserving AI collaboration.

Privacy laws (e.g., GDPR), competitive pressure, and the decentralized nature of data have traditionally prevented institutions from pooling transaction datasets, limiting fraud detection at scale.

Financial institutions adopt a federated architecture: each trains a local model on its own data, and only encrypted updates are shared for secure global aggregation. Participants apply techniques such as SMOTE to address class imbalance and use LSTM, CNN, or graph neural network models within the federated framework. Updates are aggregated centrally, iteratively refining a global model without revealing raw data.
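
The aggregation step can be sketched with federated averaging (FedAvg), where a server averages participants' model parameters weighted by their local sample counts. This minimal sketch assumes a linear scoring model in place of the LSTM, CNN, or graph models mentioned above, three hypothetical institutions whose toy data follow the weights [2, -1], and no encryption of the updates.

```python
def local_update(global_weights, data, lr=0.1, epochs=20):
    """A few epochs of gradient descent on mean squared error, run inside one institution."""
    w = list(global_weights)
    n = len(data)
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for x, y in data:
            err = sum(wi * xi for wi, xi in zip(w, x)) - y
            for j, xj in enumerate(x):
                grad[j] += 2 * err * xj / n
        w = [wi - lr * g for wi, g in zip(w, grad)]
    return w, n  # only parameters and a sample count leave the institution


def federated_average(updates):
    """FedAvg: weight each participant's parameters by its number of training samples."""
    total = sum(n for _, n in updates)
    dim = len(updates[0][0])
    return [sum(w[j] * n for w, n in updates) / total for j in range(dim)]


def run_federated_training(clients, dim, rounds=10):
    global_w = [0.0] * dim
    for _ in range(rounds):
        updates = [local_update(global_w, data) for data in clients.values()]
        global_w = federated_average(updates)
    return global_w


clients = {  # hypothetical institutions; each list of (features, target) rows stays local
    "bank_a": [([1.0, 0.0], 2.0), ([1.0, 1.0], 1.0)],
    "bank_b": [([0.0, 1.0], -1.0), ([2.0, 1.0], 3.0)],
    "bank_c": [([1.0, 2.0], 0.0), ([3.0, 1.0], 5.0)],
}
print([round(w, 3) for w in run_federated_training(clients, dim=2)])  # [2.0, -1.0]
```

Each institution's raw rows are only read inside `local_update`; the server-side `federated_average` sees nothing but parameter vectors and sample counts.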

Studies show such systems match or outperform centralized models, achieving AUCs > 0.95, F1-scores ~0.91, 10%+ lift over conventional systems, and significantly improved recall—while maintaining data privacy. Federated learning enables collaborative, privacy-safe fraud detection across institutions. It unlocks collective intelligence—without compromising customer privacy or regulatory standing.

3. Explainable Federated AI: Transparent, Compliant Banking

The combination of Federated Learning + Explainable AI (XAI) is redefining transparency in financial fraud systems, yielding both performance and interpretability.

Federated systems can be accurate yet effectively “black-box,” complicating regulatory audits and internal trust.

Explainable federated models combine SHAP and LIME with federated updates, producing privacy-aware models that also reveal their decision drivers. Participants still train local models and share encrypted updates. XAI tools then dissect model outputs to highlight feature contributions, enabling human analysts to assess, verify, and adjust the system.
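
SHAP is built on Shapley values. For a model with only a few features, exact Shapley values can be computed by enumerating every coalition of features and filling in "absent" features from a baseline record. This minimal sketch does that for a hypothetical fraud score with one interaction term; the features, weights, and baseline are assumptions, and SHAP and LIME libraries use faster approximations for real models.

```python
import math
from itertools import combinations


def fraud_score(f):
    """Hypothetical scoring model with one interaction term."""
    return (0.4 * f["amount_z"] + 0.3 * f["new_device"] + 0.2 * f["foreign_ip"]
            + 0.5 * f["new_device"] * f["foreign_ip"])


def shapley_values(model, instance, baseline):
    names = list(instance)
    n = len(names)

    def value(coalition):
        # Features in the coalition keep the instance's values; the rest use the baseline.
        mixed = {k: (instance[k] if k in coalition else baseline[k]) for k in names}
        return model(mixed)

    phi = {}
    for feature in names:
        others = [k for k in names if k != feature]
        total = 0.0
        for size in range(n):
            # Shapley weight for coalitions of this size.
            weight = math.factorial(size) * math.factorial(n - size - 1) / math.factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                total += weight * (value(s | {feature}) - value(s))
        phi[feature] = total
    return phi


flagged = {"amount_z": 2.5, "new_device": 1.0, "foreign_ip": 1.0}
typical = {"amount_z": 0.0, "new_device": 0.0, "foreign_ip": 0.0}
contributions = shapley_values(fraud_score, flagged, typical)
print({k: round(v, 3) for k, v in contributions.items()})
# {'amount_z': 1.0, 'new_device': 0.55, 'foreign_ip': 0.45}
```

The interaction between `new_device` and `foreign_ip` is split evenly between the two features, and the contributions sum to the score difference between the flagged and baseline records, which is the property that makes the attributions auditable.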

Proof-of-concept systems reach 99.95% accuracy with only a 0.05% miss rate. More importantly, they reduce false positives and support compliance through audit-ready, explainable outcomes. Explainable federated AI achieves dual objectives: high-performing fraud detection with transparency and trust, which is essential in regulated financial environments.

Explore one of our detailed projects: An Advanced AI-integrated Speaking Application: Mastering the art of communication.

Innovative AI Solutions in Risk Management

1. Generative AI for Synthetic Risk Scenarios

Generative AI now powers the creation of synthetic scenarios—producing simulated risk events such as cyber-attack spikes, market flash crashes, or operational failures. High-impact events are inherently rare; limited data makes traditional model training insufficient for stress-testing tail risks.

Generative adversarial networks (GANs) and diffusion models generate realistic synthetic datasets, filling gaps in real-world historical data. These models learn statistical distributions from past data, then create plausible, varied, and novel scenarios (e.g., rare market dips or coordinated cyber-attacks) that challenge risk controls.
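
GANs and diffusion models are too large for a short example, so this sketch swaps in a much simpler generator to show the idea: it fits the mean and standard deviation of a hypothetical return history and then samples from a fat-tailed Student-t distribution with the same spread, producing losses more extreme than any in the record. It is a parametric stand-in, not a GAN, and the 20-day history and `dof=3` setting are assumptions.

```python
import random
import statistics


def synthetic_scenarios(history, n_scenarios=10_000, dof=3, seed=42):
    """Draw daily returns from a Student-t distribution matched to the history's
    mean and standard deviation; a low `dof` gives fatter tails than a normal fit."""
    rng = random.Random(seed)
    mu = statistics.mean(history)
    sd = statistics.stdev(history)
    scale = sd * ((dof - 2) / dof) ** 0.5  # makes the t distribution's std equal sd
    scenarios = []
    for _ in range(n_scenarios):
        chi2 = rng.gammavariate(dof / 2, 2.0)          # chi-squared draw with `dof` degrees of freedom
        t = rng.gauss(0.0, 1.0) / (chi2 / dof) ** 0.5  # Student-t draw
        scenarios.append(mu + scale * t)
    return scenarios


# Hypothetical 20-day return history for a trading book
history = [0.004, -0.006, 0.011, -0.002, 0.007, -0.013, 0.003, 0.009, -0.008, 0.001,
           0.005, -0.004, 0.012, -0.010, 0.002, 0.006, -0.007, 0.000, 0.008, -0.005]
scenarios = synthetic_scenarios(history)
worse = sum(1 for r in scenarios if r < min(history))
print(f"worst historical day: {min(history):.3f}")
print(f"worst synthetic day:  {min(scenarios):.3f}")
print(f"synthetic days worse than anything in the history: {worse}")
```

Each synthetic return can then be replayed through existing limits, VaR engines, or control tests; the low degrees-of-freedom setting is what pushes probability mass into tails the short history never shows.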

Firms report deeper scenario testing that uncovers previously unseen vulnerabilities without exposing real customers to loss scenarios. Synthetic risk simulation transforms resilience-building by offering data-rich stress scenarios that carry no real-world losses, supporting stronger, scenario-informed preparedness.

2. Federated Learning for Cross-Institution Risk Collaboration

Beyond fraud, federated learning supports joint intelligence on cyber risks, AML threats, and credit default patterns without sharing customer data. Institutions can’t pool sensitive data because of regulation or competition, which weakens collective threat visibility.

They deploy federated frameworks similar to those used for fraud, training local models (e.g., for AML or cybersecurity) and sharing only model updates. Encrypted gradient updates or aggregated node weights flow to central servers. The global model improves over successive rounds as it learns from multiple siloed data sources.
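
One common way to keep individual updates hidden from the central server is secure aggregation with pairwise masks: each pair of participants shares a random mask that one adds and the other subtracts, so the masks cancel in the sum and the server learns only the aggregate. This sketch is an illustration, not a secure implementation: it assumes a hypothetical seeded generator in place of a real key exchange, fixed-point encoding of the updates, and no participant dropout.

```python
import random

MODULUS = 2**32
SCALE = 10_000  # fixed-point scaling so float updates become integers mod MODULUS


def encode(update):
    return [round(u * SCALE) % MODULUS for u in update]


def decode_average(total, count):
    """Map a modular sum back to signed floats and divide by the number of participants."""
    signed = [v - MODULUS if v >= MODULUS // 2 else v for v in total]
    return [s / SCALE / count for s in signed]


def pair_mask(i, j, dim):
    # Hypothetical stand-in for a secret agreed between participants i and j
    # (a real protocol derives it from a key exchange, not a guessable seed).
    rng = random.Random(f"pair-{min(i, j)}-{max(i, j)}")
    return [rng.randrange(MODULUS) for _ in range(dim)]


def masked_update(idx, update, n_participants):
    """What participant `idx` sends: its encoded update plus or minus each pairwise mask."""
    masked = encode(update)
    for other in range(n_participants):
        if other == idx:
            continue
        sign = 1 if idx < other else -1  # lower index adds the mask, higher index subtracts it
        mask = pair_mask(idx, other, len(update))
        masked = [(m + sign * k) % MODULUS for m, k in zip(masked, mask)]
    return masked


def server_aggregate(submissions):
    """The server only ever sums masked vectors; the pairwise masks cancel in the total."""
    return [sum(column) % MODULUS for column in zip(*submissions)]


local_updates = [[0.12, -0.05], [0.08, 0.01], [-0.02, 0.04]]  # hypothetical per-bank updates
sent = [masked_update(i, u, len(local_updates)) for i, u in enumerate(local_updates)]
print("what the server sees from bank 0:", sent[0])
print("average update:", decode_average(server_aggregate(sent), len(local_updates)))
```

Modular integer arithmetic is used so the masks cancel exactly; with floating-point masks, rounding errors would leak into the aggregate.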

Financial networks report 15–30% gains in cross-institution pattern detection and earlier identification of distributed threats, all within compliance bounds. Federated collaboration scales collective intelligence—across sectors and firms—enabling smarter, privacy-compliant risk detection.

3. Explainable AI (XAI) for Risk & Compliance Governance

Explainable AI is now being woven into risk models, ensuring transparency for decision-makers and auditors. Opaque models in financial or regulatory contexts inhibit understanding, trust, and compliance oversight.

Incorporation of XAI frameworks—like SHAP (feature importance), counterfactuals, and model distillation—within risk systems offers clarity and traceability. When a model flags a risk (e.g., unusual trades, non-compliance patterns), XAI tools attribute scores to contributing variables (e.g., trade size, counterparty behavior), enabling clear, audit-ready insights.
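
Counterfactual explanations answer a different question from SHAP: what is the smallest change that would have changed the decision? For a linear score and a single adjustable feature, the answer has a closed form. This minimal sketch computes it for a hypothetical trade-surveillance score; the weights, features, and threshold are assumptions.

```python
WEIGHTS = {"trade_size_z": 0.6, "counterparty_risk": 1.2, "off_hours": 0.8}  # hypothetical model
BIAS = -1.5
THRESHOLD = 0.0  # a score above this raises an alert


def risk_score(features: dict) -> float:
    return BIAS + sum(WEIGHTS[name] * value for name, value in features.items())


def counterfactual_value(features: dict, feature: str) -> float:
    """Value of `feature` that puts the score exactly on the alert threshold,
    holding every other feature fixed (valid for this linear model only)."""
    gap = risk_score(features) - THRESHOLD
    return features[feature] - gap / WEIGHTS[feature]


trade = {"trade_size_z": 2.0, "counterparty_risk": 0.5, "off_hours": 1.0}
score = risk_score(trade)
print(f"score={score:.2f}, alert={score > THRESHOLD}")
boundary = counterfactual_value(trade, "trade_size_z")
print(f"No alert if trade_size_z were at or below {boundary:.2f} (actual: {trade['trade_size_z']})")
```

For nonlinear models there is no closed form, and counterfactual tools instead search for the smallest plausible change across several features.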

Organizations report dramatically reduced false alerts, improved analyst trust, and faster compliance approvals during regulatory audits. By clarifying AI decisions, XAI balances sophistication with accountability—empowering risk teams to confidently adopt AI in governance.

To see how effective AI adoption can be, explore Our projects and the solutions we’ve developed in collaboration with our valued clients.

AI-Driven Innovations Transforming Risk Management

-

Emerging Technologies in AI for Risk Management

AI technologies are redefining risk monitoring and mitigation across industries. In cybersecurity, machine learning models now predict likely breach targets, analyze network traffic, and detect anomalies in real-time.

According to a Stanford report, AI‐related security incidents rose 56.4% in 2024, with 73% of enterprises facing a breach averaging $4.8 million in losses. This underscores both vulnerability and opportunity: AI systems can uncover patterns—and strengthen defenses.

In the financial sector, firms like Mastercard use AI-powered fraud detection systems analyzing up to 160 billion transactions annually, assigning risk scores within 50 milliseconds. Similarly, AI-driven insider risk management solutions, described in recent academic research, reduce false positives by 59% and slash response times by 47%. These advances illustrate how AI enhances situational awareness and detection precision in risk-intensive operations.

-

AI’s Role in Sustainability Efforts

AI also supports sustainability efforts by helping manage environmental and operational risks. Computer vision systems identify site hazards such as roof leaks or puddles in real time, reducing infrastructure damage, as demonstrated by IntelliSee’s AI with property managers. In public services, predictive analytics detect wildfire or flood threats using satellite data, alerting officials faster and more accurately.

By integrating continuous monitoring systems, organizations improve resilience—whether detecting environmental threats or minimizing operational disruptions—while aligning with ESG goals. Data-driven visibility, powered by AI, closes the loop on risk and sustainability, allowing quicker interventions and smarter resource allocation.

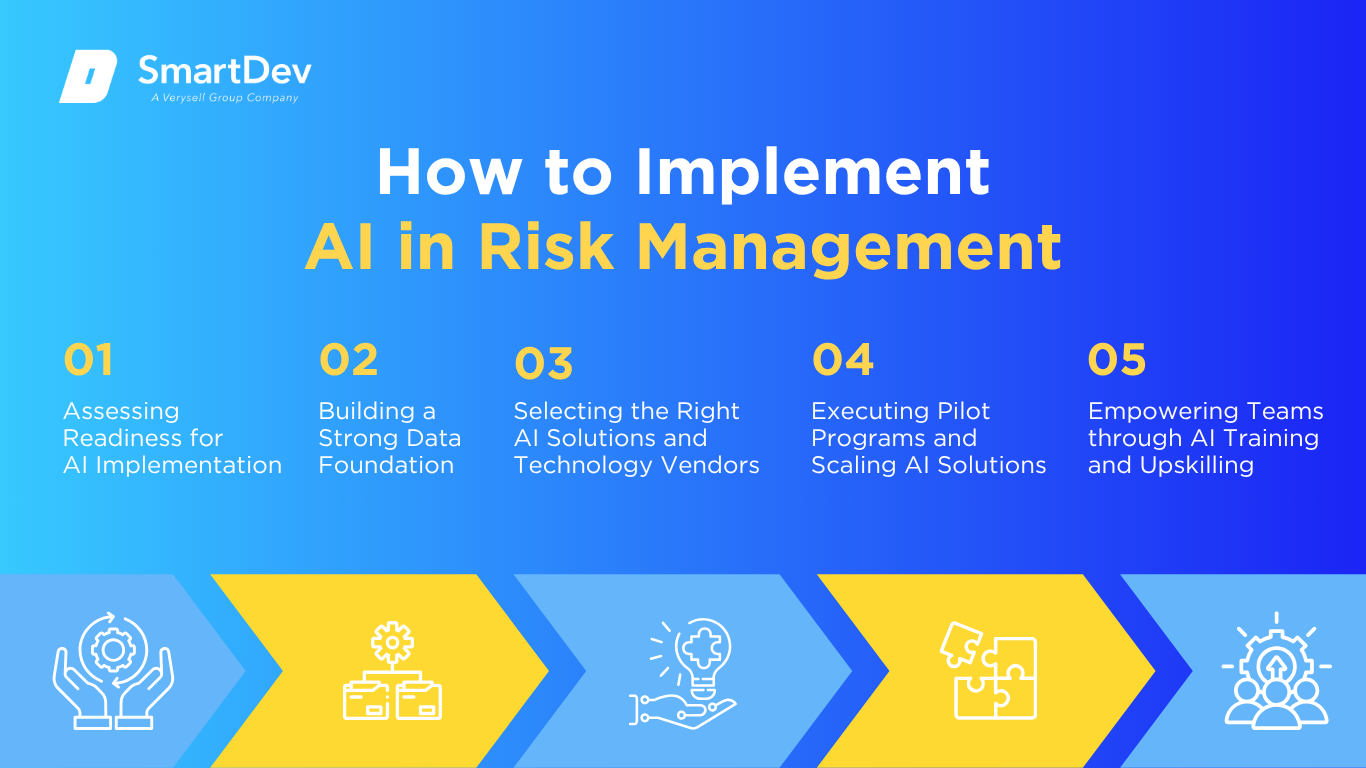

How to Implement AI in Risk Management

-

Assessing Readiness for AI Adoption

First, map out areas where AI can add value: cybersecurity monitoring, fraud detection, machine safety, or compliance workflows. Gartner reports that although 78% of organizations used AI in 2024, only 1% are mature in deployment.

Begin by understanding current capabilities and aligning AI pilots with specific risk pain points, whether breach frequency, incident response latency, or fraud exposure. Conduct a baseline risk assessment to quantify where AI can reduce losses or improve detection, then set measurable goals accordingly.
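
The baseline assessment can start from the classic annualized loss expectancy formula, ALE = annual rate of occurrence × single loss expectancy, then rank candidate areas by how much of that loss an AI pilot might plausibly remove. Every rate, loss amount, and prevention share below is a hypothetical placeholder for an organization's own incident data.

```python
def annualized_loss(events_per_year: float, loss_per_event: float) -> float:
    """Annualized loss expectancy (ALE) = annual rate of occurrence x single loss expectancy."""
    return events_per_year * loss_per_event


def prioritize(candidates: dict) -> list:
    """Rank areas by the loss an AI pilot might remove: baseline ALE x assumed prevention share."""
    rows = []
    for area, (rate, loss, prevented_share) in candidates.items():
        baseline = annualized_loss(rate, loss)
        rows.append((area, baseline, baseline * prevented_share))
    return sorted(rows, key=lambda row: row[2], reverse=True)


# Hypothetical inputs: (events per year, average loss per event, share of events AI might prevent)
candidates = {
    "vendor_breach": (0.5, 900_000.0, 0.10),
    "phishing_compromise": (6, 120_000.0, 0.25),
    "card_fraud": (1_200, 850.0, 0.30),
}
for area, baseline, reducible in prioritize(candidates):
    print(f"{area}: baseline ALE ${baseline:,.0f}, potential reduction ${reducible:,.0f}")
```

The ranking matters more than the exact dollar figures: it points the first pilot at the area with the largest reducible loss, and the measurable goal can then be stated as a target reduction in that area's ALE.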

-

Building a Strong Data Foundation

Robust AI relies on clean, well-structured data. Legal/IT professionals note that strong data governance improves AI accuracy and consistency—a must for risk modeling. Develop processes to collect and cleanse data from logs, sensors, transaction systems, and incident reports. Ensure metadata tags, timestamps, and labeling are standardized. Implement access controls that protect sensitive data while enabling analytics, and adopt monitoring to continuously verify data integrity.

-

Choosing the Right Tools and Vendors

Industry-specific vendors now offer modular AI risk solutions. For example, CENTRL’s AI-powered due diligence platform helps financial institutions automate complex security questionnaires, cutting turnaround time by over 50% within 8 weeks. Meanwhile, best-in-class cybersecurity tools combine anomaly detection with LLM-based explanation layers, improving interpretability and response efficiency. Evaluate vendors based on model accuracy, explainability, integration capabilities, compliance certifications, and ongoing support.

-

Pilot Testing and Scaling Up

Effective adoption starts with controlled pilots. Run a pilot in a high-impact domain, such as insider risk monitoring, using real event data. Evaluate performance against key indicators: detection rate, false positive reduction, and response time. For instance, adaptive IRM systems have halved false alerts and cut response times by nearly 50%. Document findings, refine models, and gradually expand into adjacent areas such as external fraud or third-party risk.
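
Those three indicators can be computed the same way for the pre-pilot baseline and the pilot period so the comparison is like for like. This minimal sketch treats detection rate as recall (detected incidents over all confirmed incidents) and uses median response time to limit the influence of outliers; all counts and times are hypothetical.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class PeriodResults:
    true_positives: int            # confirmed incidents the system alerted on
    false_negatives: int           # confirmed incidents it missed
    false_positives: int           # alerts that turned out to be benign
    response_minutes: list[float]  # time from alert to containment, per incident

    def detection_rate(self) -> float:
        return self.true_positives / (self.true_positives + self.false_negatives)


def percent_change(before: float, after: float) -> float:
    return 100.0 * (after - before) / before


def pilot_report(baseline: PeriodResults, pilot: PeriodResults) -> dict:
    return {
        "detection_rate_before": round(baseline.detection_rate(), 3),
        "detection_rate_after": round(pilot.detection_rate(), 3),
        "false_positive_change_pct": round(
            percent_change(baseline.false_positives, pilot.false_positives), 1),
        "median_response_change_pct": round(
            percent_change(median(baseline.response_minutes), median(pilot.response_minutes)), 1),
    }


legacy = PeriodResults(true_positives=30, false_negatives=10, false_positives=400,
                       response_minutes=[120, 95, 180, 150, 110])
ai_pilot = PeriodResults(true_positives=36, false_negatives=4, false_positives=170,
                         response_minutes=[60, 55, 90, 70, 65])
print(pilot_report(legacy, ai_pilot))
# {'detection_rate_before': 0.75, 'detection_rate_after': 0.9,
#  'false_positive_change_pct': -57.5, 'median_response_change_pct': -45.8}
```

Reporting detection rate alongside false-positive change guards against a pilot that looks quieter simply because it alerts less and misses more.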

-

Training Teams for Successful Implementation

AI systems are only effective when humans understand and trust them. Upskill staff through workshops, combining technical training and scenario-based exercises. Emphasize the symbiosis between AI and human judgment—show how AI alerts flag anomalies, but decisions remain with analysts. Encourage cross-functional feedback loops so teams review AI recommendations and continuously improve model performance.

From predictive fraud detection to automated risk assessment and smarter compliance monitoring, the possibilities are endless. Contact our team at smartdev.com/contact-us to explore tailored AI solutions that drive engagement, efficiency, and growth.

Measuring the ROI of AI in Risk Management

-

Key Metrics to Track Success

When assessing the ROI of AI in risk management, it’s essential to look beyond surface-level savings. The true value lies in quantifiable performance improvements across detection, prevention, and operational efficiency. Commonly measured KPIs include the percentage reduction in incidents, false positives eliminated, mean-time-to-detect (MTTD), and mean-time-to-respond (MTTR). These metrics offer concrete proof of how AI tangibly enhances risk resilience.
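
MTTD and MTTR are averages over incident timestamps. This sketch assumes each incident record carries hypothetical `started`, `detected`, and `resolved` times and measures MTTR from detection to resolution; some teams measure it from onset instead, so the definition should be fixed before comparing periods.

```python
from datetime import datetime, timedelta
from statistics import mean


def hours(delta: timedelta) -> float:
    return delta.total_seconds() / 3600


def mttd_mttr(incidents):
    """Return (mean time to detect, mean time to respond) in hours."""
    detect = [hours(i["detected"] - i["started"]) for i in incidents]
    respond = [hours(i["resolved"] - i["detected"]) for i in incidents]
    return mean(detect), mean(respond)


ts = datetime.fromisoformat
incidents = [  # hypothetical incident log
    {"started": ts("2024-03-01T08:00"), "detected": ts("2024-03-01T14:00"), "resolved": ts("2024-03-02T02:00")},
    {"started": ts("2024-03-09T22:00"), "detected": ts("2024-03-10T00:00"), "resolved": ts("2024-03-10T04:00")},
    {"started": ts("2024-03-20T10:00"), "detected": ts("2024-03-20T11:00"), "resolved": ts("2024-03-20T13:00")},
]
mttd, mttr = mttd_mttr(incidents)
print(f"MTTD: {mttd:.1f} h, MTTR: {mttr:.1f} h")  # MTTD: 3.0 h, MTTR: 6.0 h
```

Computing both metrics from the same incident records before and after an AI deployment is what allows a reduction to be attributed to the tool rather than to a change in how incidents are logged.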

For instance, IBM’s Watson for Cyber Security has enabled organizations to reduce incident investigation time by up to 90%, significantly decreasing operational burdens on security teams. Another benchmark comes from AI-powered AML (anti-money laundering) solutions, which have improved suspicious activity detection rates by up to 300%, while reducing the volume of false positives by as much as 85%, according to studies by McKinsey and SAS. These metrics translate into significant savings on compliance labor, fines, and reputational damage.

Furthermore, predictive maintenance models—deployed in manufacturing or energy sectors—have reduced equipment failure risk by 30% and unplanned downtime by 25%, driving both revenue preservation and improved customer trust. Risk mitigation, once a cost center, is evolving into a strategic value contributor thanks to AI.

-

Case Studies Demonstrating ROI

One standout example is Mastercard’s AI-driven Decision Intelligence. Facing rising online fraud rates, Mastercard integrated AI to analyze over 160 billion transactions annually. The system assigns real-time risk scores, enabling banks to approve legitimate transactions faster. The result? A 22% reduction in false declines, which directly improved customer satisfaction and recovered millions in potentially lost revenue.

Another example: a large North American bank implemented CENTRL’s AI-powered due diligence platform for vendor risk management. Before AI, manual risk reviews delayed processes and required substantial headcount. Post-implementation, the bank accelerated reporting cycles by over 50% while maintaining compliance rigor—achieving this with zero increase in staffing. The time-to-value on the AI investment was just under two months.

In a different sector, a Fortune 500 manufacturing firm utilized predictive AI models to monitor over 4,000 factory sensors. Before adoption, unplanned equipment outages cost them over $1.2 million annually. Post-deployment, AI detected 92% of failure conditions in advance, reducing costly downtime by 40%, slashing emergency repair costs, and boosting uptime-related output by an estimated $750,000 in year one alone.

Cybersecurity firms like Darktrace also highlight success: their AI models help organizations reduce threat detection time from hours to seconds. In one case, a healthcare provider detected and neutralized a ransomware attack in real time, potentially saving them over $2.5 million in breach-related costs. These real-world examples highlight that AI in risk management is not an abstract benefit—it delivers substantial, measurable value.
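
The case studies above report benefits, but ROI also depends on the cost side. A minimal sketch of the standard calculation, ROI = (total benefit - total cost) / total cost, plus a simple payback period; all inputs are hypothetical and not taken from the cases above, and the calculation ignores discounting.

```python
def roi_percent(annual_benefit: float, annual_cost: float, upfront_cost: float, years: int) -> float:
    """Undiscounted ROI over `years`: (total benefit - total cost) / total cost, as a percentage."""
    total_benefit = annual_benefit * years
    total_cost = upfront_cost + annual_cost * years
    return 100.0 * (total_benefit - total_cost) / total_cost


def payback_months(annual_benefit: float, annual_cost: float, upfront_cost: float) -> float:
    """Months until cumulative net benefit covers the upfront investment."""
    monthly_net = (annual_benefit - annual_cost) / 12
    if monthly_net <= 0:
        return float("inf")  # the project never pays back
    return upfront_cost / monthly_net


# Hypothetical figures: yearly avoided losses and saved hours, yearly running cost, build cost.
benefit, running, build = 900_000, 200_000, 350_000
print(f"3-year ROI: {roi_percent(benefit, running, build, years=3):.0f}%")  # 3-year ROI: 184%
print(f"Payback: {payback_months(benefit, running, build):.1f} months")  # Payback: 6.0 months
```

For multi-year programs, discounting future benefits (net present value) gives a more conservative figure than this undiscounted version.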

Measuring ROI can be challenging for many businesses and institutions because their backgrounds and cost structures differ. To dig deeper into this problem, read AI Return on Investment (ROI): Unlocking the True Value of Artificial Intelligence for Your Business.

-

Common Pitfalls and How to Avoid Them

While ROI is promising, several pitfalls can derail value realization. One major issue is data immaturity—AI systems are only as good as the data they ingest. If training data lacks diversity, the model may miss nuanced or evolving threats. For example, an insurance firm used an off-the-shelf fraud model trained on generic retail data. It failed to detect domain-specific anomalies, resulting in undetected fraud and reputational damage. The fix? A custom model retrained on sector-specific data sets.

Another risk is misalignment between AI capabilities and business goals. If KPIs aren’t well-defined upfront, you might end up automating low-value tasks. A logistics company once implemented AI to flag route disruptions but failed to tie alerts to decision-making protocols, rendering insights useless. When they integrated AI with dispatch operations, the system finally delivered value—reducing rerouting costs by 18%.

Additionally, a lack of transparency in AI decision-making can lead to mistrust, especially in regulated industries like finance and healthcare. Without explainability, compliance teams may resist adoption. Companies that adopt explainable AI (XAI) frameworks—where risk assessments come with rationale—see better stakeholder engagement and smoother audit approvals.

Finally, a poor change management strategy can stifle even the best AI solution. Resistance from risk analysts, fear of job loss, and insufficient training often reduce adoption rates. Companies that pair AI deployment with comprehensive training and cross-departmental pilots enjoy better outcomes—both culturally and financially.

Future Trends of AI in Risk Management

-

Predictions for the Next Decade

Over the next decade, AI in risk management will evolve toward autonomous, explainable systems. Probabilistic risk assessments—borrowed from aerospace and nuclear industries—will become standard in AI governance, enabling quantified risk pathways and evidence-based projections.

Hybrid modeling will combine structured rules and adaptive learning, minimizing both false negatives and false positives. Moreover, ESG and sustainability risk vectors, such as climate hazards and supply chain vulnerabilities, will increasingly be integrated into AI risk platforms.

-

How Businesses Can Stay Ahead of the Curve

Organizations should adopt flexible architectures that incorporate explainable AI, continuous monitoring, and regulatory compliance. Investing in ethical frameworks—from data governance to model transparency—ensures preparedness for evolving standards.

Firms can stay ahead by participating in industry consortiums, adopting best practices from high-reliability sectors, and engaging in continuous skill development. Ultimately, blending AI with human judgment and strong governance will create resilient, risk-aware enterprises ready for future challenges.

Conclusion

-

Summary of Key Takeaways on AI Use Cases in Risk Management

AI is revolutionizing risk management through real-time fraud detection, insider threat prediction, environmental hazard monitoring, and compliance automation. Measurable ROI has been demonstrated: reduced false alerts (‑59%), faster response times (‑47%), and improved operational efficiency (+50%). But success depends on clean data, thoughtful pilots, and strong governance frameworks.

-

Call-to-Action for Businesses Considering AI Adoption

If you’re a risk or compliance leader, start with a small-scale pilot in a high-impact area—like fraud or insider monitoring. Define clear KPIs, invest in data readiness and governance, and partner with vendors offering explainable, compliant AI solutions. By starting methodically, addressing bias early, and embedding human oversight, you’ll unlock strategic value: reduced risk, greater efficiency, and sustainable resilience in the AI era.

Explore AI solutions tailored for risk management at AI & Machine Learning and take the first step toward transforming your business. The future of risk management is here—embrace it today.

References

- https://www.ibm.com/think/insights/ai-risk-management

- https://kpmg.com/ae/en/home/insights/2021/09/artificial-intelligence-in-risk-management.html

- https://www.mckinsey.com/capabilities/risk-and-resilience/our-insights/how-generative-ai-can-help-banks-manage-risk-and-compliance

- https://www.ey.com/en_gl/insights/assurance/why-ai-is-both-a-risk-and-a-way-to-manage-risk

- https://legal.thomsonreuters.com/blog/how-ai-can-help-you-manage-risks/

- https://www2.deloitte.com/content/dam/Deloitte/us/Documents/audit/us-ai-risk-powers-performance.pdf

- https://www.nist.gov/itl/ai-risk-management-framework